Apple Unveils M5 Pro and M5 Max Chips: Neural Network Accelerators Deliver 3.6x AI Performance Boost

Apple releases all-new M5 Pro and M5 Max chips featuring Fusion Architecture and neural network accelerators. GPU performance increases by 50%, with local AI model inference up to 3.6x faster.

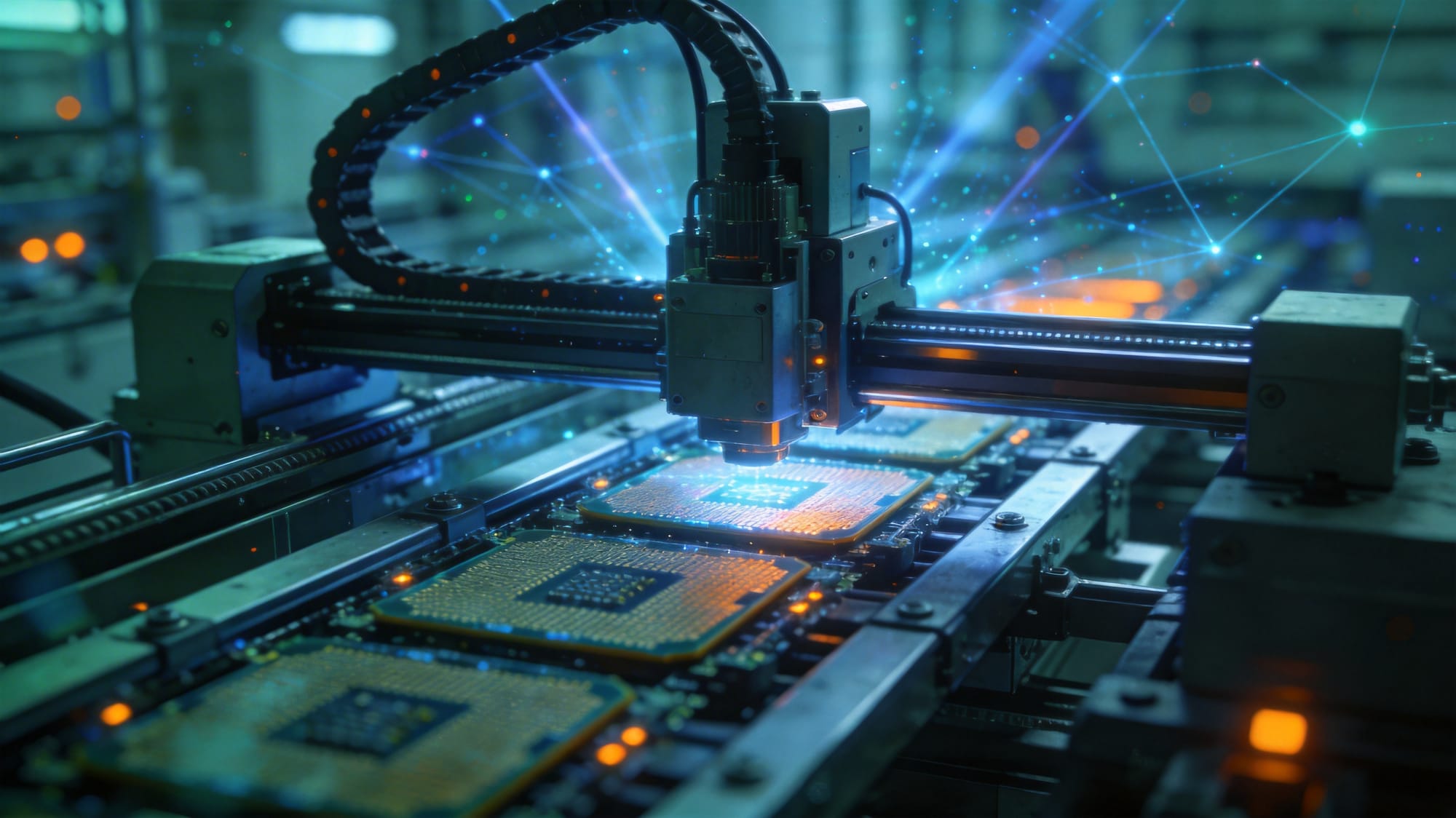

On March 11, 2026, Apple officially released the all-new M5 Pro and M5 Max chips, designed specifically for demanding professional workflows. Both chips use Apple's new Fusion Architecture, engineered from the ground up for AI tasks. Apple claims local AI model inference runs up to 3.6x faster than on the previous generation.

Fusion Architecture: A Major Breakthrough

M5 Pro and M5 Max are the first chips to feature Apple's Fusion Architecture. This innovative design combines two separate dies into a single system-on-a-chip (SoC), delivering "tremendous performance boosts." Apple's Senior Vice President of Hardware Engineering, John Ternus, stated: "Both chips underscore our relentless pace of innovation, integrating the world's fastest CPU cores, a next-generation GPU with Neural Accelerators, a faster Neural Engine, and high-bandwidth, high-capacity memory."

50% GPU Performance Increase

Compared to M4 Pro and M4 Max, M5 Pro and M5 Max deliver up to a 50% increase in graphics performance. This improvement lets motion designers work with complex 3D scenes in real time and VFX artists preview effects instantly. For professional creators, this means smoother workflows and shorter render wait times.

Major Leap in Neural Engine

On-device AI acceleration is the standout feature of the M5 series. Alongside a faster Neural Engine, each GPU core now includes a Neural Accelerator, so machine learning inference tasks can run efficiently on-device. Apple claims that M5 chips deliver up to 3.6x faster local inference on specific AI benchmarks compared to the previous generation.

This breakthrough is significant for professionals who need to run AI applications offline. Developers can now run larger language models directly on a MacBook Pro without relying on cloud APIs.

Unified Memory and Bandwidth

M5 Pro and M5 Max support higher-bandwidth, larger-capacity unified memory, providing ample data throughput for AI workloads. This makes it possible to run 70B-parameter-class AI models locally, further blurring the line between mobile devices and professional AI workstations.
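
To see why memory capacity matters for a 70B-parameter model, here is a rough back-of-the-envelope sketch of the memory needed to hold the weights alone at a few common precisions. The function name and the chosen bit widths are illustrative assumptions, not figures from Apple, and the estimate ignores the KV cache, activations, and runtime overhead, so real requirements would be higher.

```python
def model_weight_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes.

    Hypothetical helper for illustration: ignores KV cache, activations,
    and framework overhead.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Assumed precisions: 16-bit (half precision), 8-bit and 4-bit quantization.
for bits in (16, 8, 4):
    print(f"70B parameters at {bits}-bit: ~{model_weight_gb(70, bits):.0f} GB")
```

Under these assumptions, a 70B model needs roughly 140 GB at 16-bit precision but about 35 GB at 4-bit, which is why quantization and large unified memory together determine whether such a model fits on a single machine.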

Reference: Apple Newsroom