NVIDIA vs AMD 2026 GPU Showdown: Ultimate Comparison for Gaming and AI Performance

The 2026 GPU showdown pits NVIDIA's CUDA ecosystem, credited with 2x faster AI training, against AMD, which is closing the gap with ROCm 7 and 432GB of HBM3E memory. Which GPU best fits your needs?

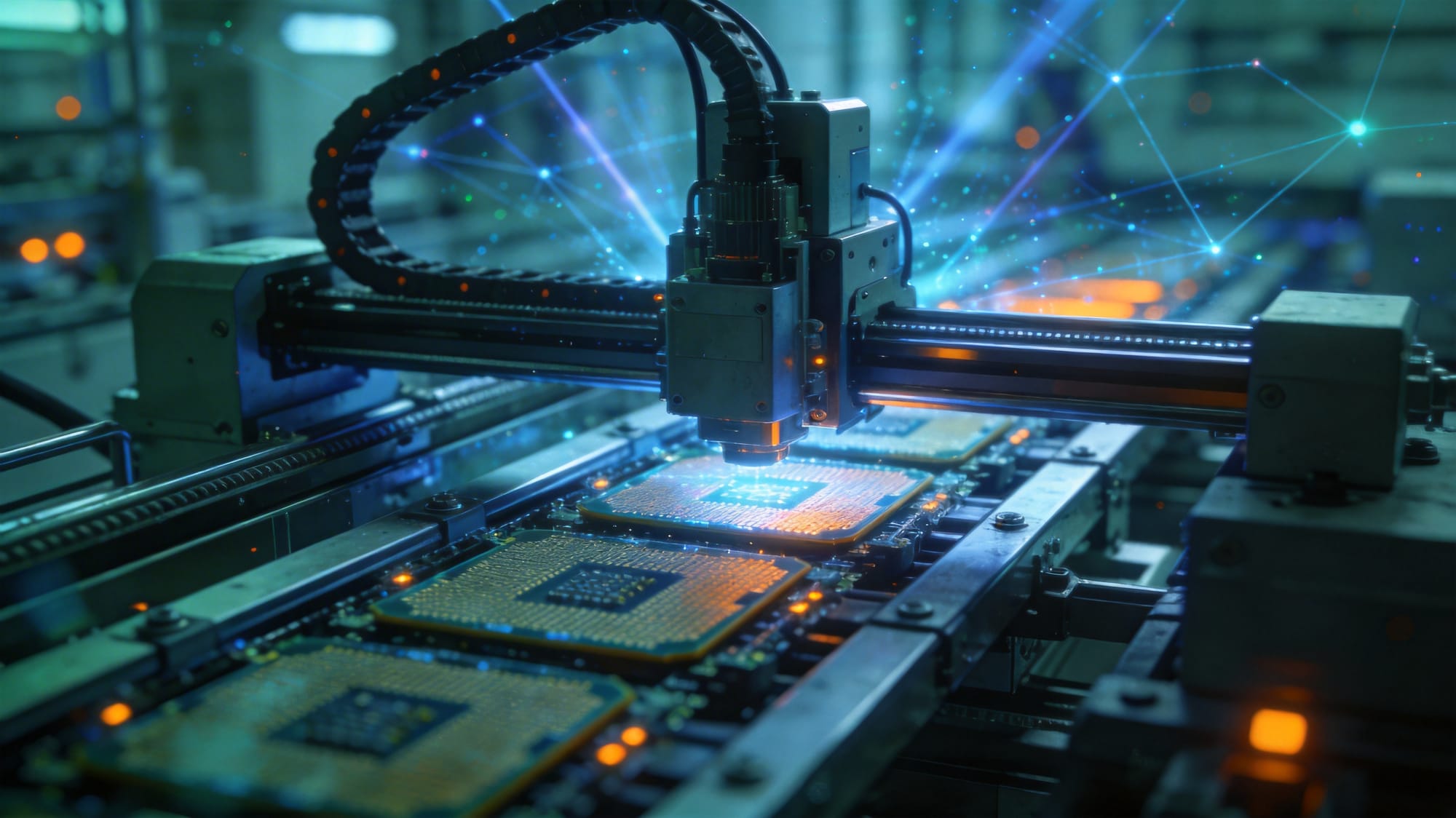

In March 2026, the GPU competition between NVIDIA and AMD enters a new phase. The two chip giants are battling intensely in gaming and AI, with their latest products offering distinct advantages in performance, power consumption, and ecosystem.

AI Training: NVIDIA CUDA Still Leads

According to the latest benchmark data, AI training on NVIDIA's CUDA ecosystem runs 2x faster than on AMD. The advantage stems from an ecosystem that has matured over many years, along with its extensive software libraries and toolchain.

NVIDIA's CUDA platform has become the standard for AI research and enterprise deployment. From deep learning frameworks to GPU-accelerated libraries, CUDA offers a complete ecosystem that competitors cannot easily replicate.

AMD ROCm 7 Catching Up

However, AMD isn't giving up the chase. ROCm 7, paired with 432GB of HBM3E memory on the hardware side, is closing the gap with NVIDIA. HBM3E (an extended version of third-generation High Bandwidth Memory) provides very high memory bandwidth, which helps AMD GPUs handle large-scale AI models.
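To make the capacity argument concrete, here is a rough back-of-the-envelope sketch in Python of how model size maps to memory. The function names, the example model sizes, and the 1.2x overhead factor for activations and caches are illustrative assumptions, not figures from the benchmark data; only the 432GB capacity comes from the article.

```python
# Rough estimate of whether a model's weights fit in GPU memory.
# All names and the overhead factor below are illustrative assumptions.

def weights_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def fits(num_params: float, bytes_per_param: float,
         capacity_gb: float, overhead: float = 1.2) -> bool:
    """True if weights plus an assumed overhead margin fit in capacity_gb."""
    return weights_gb(num_params, bytes_per_param) * overhead <= capacity_gb


if __name__ == "__main__":
    capacity = 432  # GB, the HBM3E figure quoted in this article
    # (parameter count, bytes per parameter): hypothetical example models
    for params, width in [(70e9, 2), (405e9, 2), (405e9, 0.5)]:
        size = weights_gb(params, width)
        print(f"{params / 1e9:.0f}B params at {width} B/param: "
              f"{size:.1f} GB, fits: {fits(params, width, capacity)}")
```

Under these assumptions, a 70-billion-parameter model in 16-bit precision needs about 140 GB for weights and fits on a single 432GB device, while a 405-billion-parameter model at the same precision does not unless it is quantized or split across devices.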

AMD's ROCm platform has evolved rapidly in recent years, and its compatibility keeps improving. For users on a limited budget who need a large amount of VRAM, AMD graphics cards may offer better value.
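One practical side of that compatibility is that PyTorch ships both CUDA and ROCm builds, and a script can check which one it is running on. The sketch below is a minimal illustration, assuming PyTorch may or may not be installed; the helper name `detect_backend` is hypothetical, while `torch.version.cuda` and `torch.version.hip` are the attributes PyTorch uses to report its build.

```python
# Minimal sketch: report which GPU software stack the local PyTorch build targets.
# detect_backend is a hypothetical helper name, not part of any library.

def detect_backend() -> str:
    """Return 'rocm', 'cuda', 'cpu', or 'none' (PyTorch not installed)."""
    try:
        import torch
    except ImportError:
        return "none"
    # ROCm builds set torch.version.hip to a version string; other builds leave it None.
    if getattr(torch.version, "hip", None):
        return "rocm"
    # CUDA builds set torch.version.cuda; CPU-only builds leave it None.
    if getattr(torch.version, "cuda", None):
        return "cuda"
    return "cpu"


if __name__ == "__main__":
    print("PyTorch build backend:", detect_backend())
```

This only reports what the installed build was compiled for. It does not measure performance or confirm that a given model runs equally well on both stacks.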

Gaming: Each Has Its Strengths

In gaming, both companies' product lines span price segments from entry-level to flagship. NVIDIA's DLSS, which uses AI-driven super resolution, still leads, while AMD's FSR (FidelityFX Super Resolution) is iterating rapidly.

For players pursuing ultimate gaming experiences, the choice depends on specific games, resolution, and budget.

How to Choose?

AI/Deep Learning: NVIDIA CUDA ecosystem is mature, training is faster

Large Model Inference: AMD 432GB HBM3E VRAM may offer better value

Gaming: Choose based on specific games and budget

Developers/Enterprises: Consider overall ecosystem and long-term support

Reference: TechTimes